Course Description

Focuses on the problem of supervised learning from the perspective of modern statistical learning theory, starting with the theory of multivariate function approximation from sparse data. Develops basic tools such as regularization, including Support Vector Machines for regression and classification. Derives generalization bounds using both stability and VC theory. Discusses topics such as boosting and feature selection. Examines applications in several areas: computer vision, computer graphics, text classification, and bioinformatics. Final projects, hands-on applications, and exercises are planned, paralleling the rapidly increasing practical uses of the techniques described in the subject.

Learning Resource Types

Lecture Notes

Problem Sets

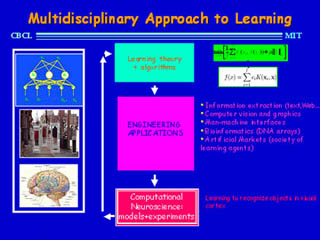

Designing and building a system that functions the same way as the human visual system, but without getting bored and with a greater degree of accuracy. (Image courtesy of Poggio Laboratory, MIT Department of Brain and Cognitive Sciences.)